This talk concerns:

- Web

- More than native software

- Infrastructure

- More than product development

- Independents (and by extension, small agencies)

- More than in-house

The purpose of this talk is to generate some new ideas about how we think about the business of making things on the Web.

Why you should listen to me:

I have been making things on the Web since 1995 (age 15-16)

Since then I have taught myself, through hands-on experience, (and in rough chronological order):

- visual design

- front-end dev

- back-end dev

- sysadmin

- DBA

- data viz

- information architecture

- interaction design

- content strategy

- …

This makes me a unicorn:

Though not altogether the force of nature touted by the likes of Jared Spool.

But it does afford me a perspective of being able to look across both the medium and the way that medium gets developed.

Let me explain:

Blue-chip projects can afford to pay for specialists in both dollars and time.

Unicorns don't need any help for anything, as long as the workload isn't too overwhelming.

This makes us unicorns valuable at the low end.

And low-end is where I've spent the last six or so years.

Like many of you here, I'm sure, I've oscillated back and forth between in-house and indie.

A few years ago, I finally made up my mind and went indie for good.

After a lot of introspection, it came to me that autonomy was a necessary condition for performance, and autonomy, to the degree that I needed it, proved much too costly to negotiate into a conventional employment contract.

But this is not an informercial for going indie, this is about the practice and the business of making things on the web.

One could argue that I chose the perfect time to quit my nice cushy R&D job:

Even though we didn't get hit by the crisis nearly as badly in Canada, businesspeople were scared to spend any money they didn't absolutely have to.

The only people I could find who were doing any work during the crisis, managed small, non-profit entities like unions and professional associations.

These organizations tend to staff up to about a dozen people or so, and run annual budgets in the low millions.

They can afford some development, but they can't afford the massive overruns endemic to our industry.

Moreover, these are not sophisticated players, and scarcely understand what the Web is, let alone what it can do for their organizations.

But, I thought, there are thousands of them. In fact, of the nearly 8 million business entities in Canada and 28 in the US, over half of them have fewer than 20 employees.

Companies:

- 8 Million in Canada

- 28 Million in the USA

- Most are not

tech- Most are too small

- But plenty are big enough

We think of these entities as being largely inacessible to us, and that's where the software-as-a-service market comes in. But SaaS doesn't save these people any money; rather it just amortizes the cost.

Furthermore, SaaS companies tend to require a commitment of a certain period, say 5 years, or an implicit lock-in because they have all your data. What if nobody uses the system because it is a user experience nightmare?

One of my clients is in exactly this predicament. He's shelling out money every month to a Web-based infrastructure vendor that his staff has completely abandoned, and he wants to get rid of it.

We went and looked at competing SaaS vendors and did the math: $100,000 with a 5 year commitment. One of them wanted $35k up front.

He asked me: if we go with one of these vendors, how will we know that we won't end up in the same predicament? Moreover, if we spend that money (and probably more) at an agency, how do we know, after a year of development, that we won't be in the same predicament then?

My answer: Simple. You do UX design.

There's only one problem: the way we do UX these days means he pays $50k for a PDF full of design recommendations. Implementation is extra.

This is somebody I've managed to educate on the value of UX, but a fifty thousand dollar document is a non-starter.

What we're looking at here is a huge potential market for user experience design if we can figure out how to dispense our services in smaller increments.

The problem is that our business practices are pinned to the app or the website as the unit of commerce, and our processes and tools reflect that.

Sure, you can be as Lean or Agile as you want within the confines of a project, but at the end of the day, we're typically either bidding on entire website projects or billing hours for pieces of them.

Middleware like Drupal is designed to take over the entire site. The same goes for the more fundamental Web frameworks: Rails, Django, Catalyst, etc.

These technology products then feed back into the business aspect, dictating what can and cannot be done.

The tendering process, as it is still practiced today, effectively generates a list of features, and those features are priced around whether or not there is an existing module that supplies the functionality. The features are then summed up, and you get the price for the whole project.

Common practice is then to take one third to one half of the money up front, then optionally a progress payment in the middle, and the rest on completion.

This makes for huge pressure to get things done on schedule. Since the item being delivered is code, development gets first priority and user experience design gets squeezed out.

Moreover, how much of a cash cushion does an agency need to keep around for contingencies? What if the client doesn't pay on time, or at all? We've all heard the horror stories, even if we're lucky enough to not have been present for them.

And finally, before I move to the next section, what about the client? You deliver the features, but the overall UX has largely been determined by the behaviour of third-party modules. What if it sucks?

The issue I believe we all need to consider is that of risk.

We can think of risk as a three-dimensional object:

- Probability

- The likelihood an event (bad or good) will occur,

- Magnitude

- The severity of the event,

- Horizon

The instant in the future when the event will either have occurred or is no longer a possibility.

What is the likelihood of an event occurring, during the year or so of a decently-sized Web project, that will seriously impinge on the agency's ability to earn a profit?

Delays are almost certain.

Scope creep is almost certain.

People get sick, they get in accidents, they go on vacation.

It occurs to me that the only agencies that can reliably earn a profit under the incumbent contractual model are either enormous, or they have their offerings on lockdown.

They're like McDrupal: they only serve what's on the menu.

What are the chances the menu will align perfectly—or even satisfactorily—with the needs of the client and their users?

This state of affairs is what Nassim Taleb calls fragile.

Everything has to go perfectly according to plan, or the forecasted outcome is compromised.

What if, as Taleb suggests, we made our endeavours antifragile? What if we arranged the business of creative work so that unpredictable events actually helped?

We've all seen the triangle: you can have it fast, cheap or good, pick two.

There are two problems here: one is that time and cost are bound to one another, since there are no materials to buy, and no labour to speak of aside from problem-solving. What you're paying for is to keep people sustained while they hash out the problem set.

The other problem is that you can't make design—or implementation for that matter—go any faster than it takes to solve any one particular problem, which is completely unique and idiosyncratic to the context.

Design, unfortunately, costs what it costs what it costs.

That isn't to say that it has to cost more, though design will cost more, up front at least, than no design. It's an entirely new category of cost.

We do know, however, that design leads to fewer mistakes, less vacillation and less confusion during implementation, lowering costs and dramatically increasing value, which is a good chunk of the reason why we do it.

So we can say that the role of design is to eliminate extraneous decision-making on the part of implementers—decisions that they would rather not have to—or even be equipped—to make.

We can likewise say that the task of design is to gather, concentrate and represent those decisions in a manner which is accurate to the shared goals of the business and its users, comprehensible to implementers and plausible to everybody.

- Gather information

- Concentrate it into a set of design decisions

- Represent it…

…so that it is:

- Accurate to the shared goals of the business and its users

- Comprehensible to implementers

- Plausible to everybody

If information is the lifeblood of our practice, then to continue we need a phenomenology of information.

That is to say: we need a robust—but not overly prescriptive—theory for what is actually happening within and between people during the design process.

Information is tied to the state of bit of physical stuff in a particular region of space and time, and only then under specific conditions. Sometimes that information is buried inside other information, or doesn't even exist anymore, or yet.

If you need a particular piece of information, you have to do whatever it takes to go and get it.

If this were not true, then humanity wouldn't need to spend two decades and billions of dollars (Euros) building this:

If all it took to get useful information about the real state of the world was to sit in your office and think about it, projects like the Large Hadron Collider would be a colossal waste of effort.

We can therefore think about the process of gathering information much like a scavenger hunt.

You arrive at the first clue, which tells you to go across town to fetch the second clue, which promptly tells you to return to fetch the third clue within a few feet of the first.

Had you known, you could have scooped up the first and third clues immediately, and saved yourself a trip.

But the information about the third clue, when you were standing just feet away from it, was locked away in the future.

The character of this process is what the wonks call stochastic, and this particular behaviour is what they call path dependence.

If you are doing any kind of design, scientific inquiry, or literally any problem-solving that is not a completely closed system like a jigsaw puzzle, then this is your reality.

This inherent property of information is the number one thing that stands between you and any promises you might make about when things will be done and how much it will cost.

Once we have our information, we then have to figure out what to do with it.

We can think of the abstract problem of design as a hairball of discrete considerations, related to one another on the basis of either mutual support, or conflict.

It should be a truism, furthermore, that the way to solve a complex problem is to break it apart, recursively, into an array of simple problems, solve those one by one, and then integrate the whole.

The problem, or I should say, meta-problem here, is that the articulation points—the best places to cut the hairball—are not immediately obvious to us. This situation gets untenable when the structure grows beyond even a trivial size.

If we divide the problem up into arbitrary categories, we run the risk of cutting across a multitude of concerns, which inevitably yields a bad experience and ultimately a poorly-performing end result.

As such, cutting up a project by feature, or by a website's main navigation, is almost certain to give us this unwanted effect.

The good news is that the process of deconstructing hairballs of this sort is a well-understood mathematical problem.

So here I'm tempted to say, great, let's code up a tool to help us with this.

The bad news is that in practice, we still have a bottleneck waiting for all the design considerations to come in so we can compute the decomposition pattern, and any new information that trickles in is likely to yield a completely different pattern overall.

In lieu of an exhaustive solution, I propose a simple heuristic: divide the project, at least loosely, in terms of the distinct goals of prospective users.

What makes it simple is that in any software system, users don't directly interact with one another except for when and where they do.

So at any time, you're only ever dealing with the mutual concerns of a small subset of users, which, if that number isn't one, can in practice be decomposed into sets of two.

This heuristic won't be as optimal as a pure mathematical solution, but it's a considerably smaller problem to tackle than an entire app or website, and considerably more optimal than cutting up the work by feature or section.

Now, I don't expect the idea of organizing around user goals to be new to anybody, but what I will argue in a moment is the need to bring this idea up to the level of the business arrangement.

Before that, however, I need to tackle one more issue in our phenomenology of information.

One of the main benefits of being able to cut up a complex problem is that we can hand the pieces off to other people so that they can work on them in parallel.

There are three problems with this:

The first is that the relationship between the number of people on a project and the communication channels between them is exponential.

Specifically, that relation is (N^2-N)/2.

What this means is that you have to break larger projects apart in such a way that people don't have to communicate with each other very much, at least relative to the total amount they could possibly communicate.

It also means you want as few people working on a distinct sub-problem as you can get away with.

The second issue is that the specific expertise of all the players involved (UX research, interaction design, information architecture, visual design, front-end development, back-end development, etc.) tends to cut across the concerns of actually realizing a result.

What this means is that an individual practitioner's specific involvement in the realization of a user goal is going to vary wildly, both relative to each user goal, and the identified interactions between user goals.

However, we can understand the development of a response to a user goal as a sequence of increasing detail, that progresses from a rough sketch in vernacular language that anybody can understand, to a set of instructions so precise that they can be executed by machine.

Since, in practice, we can't hire a person to do a tiny job and then let them go, only to hire them again for a tiny job later, we should expect to have to temporarily abandon our work on a specific user goal and pick up another one, such that we accumulate enough work for the appropriate specialist to do all at once.

In industry parlance we call this a pivot, so what I'm saying here is that we have to be aggressive in our stance toward pivots and treat them as the norm.

The final problem in this section is that of negotiating common language between different people, so that we can divide tasks among them.

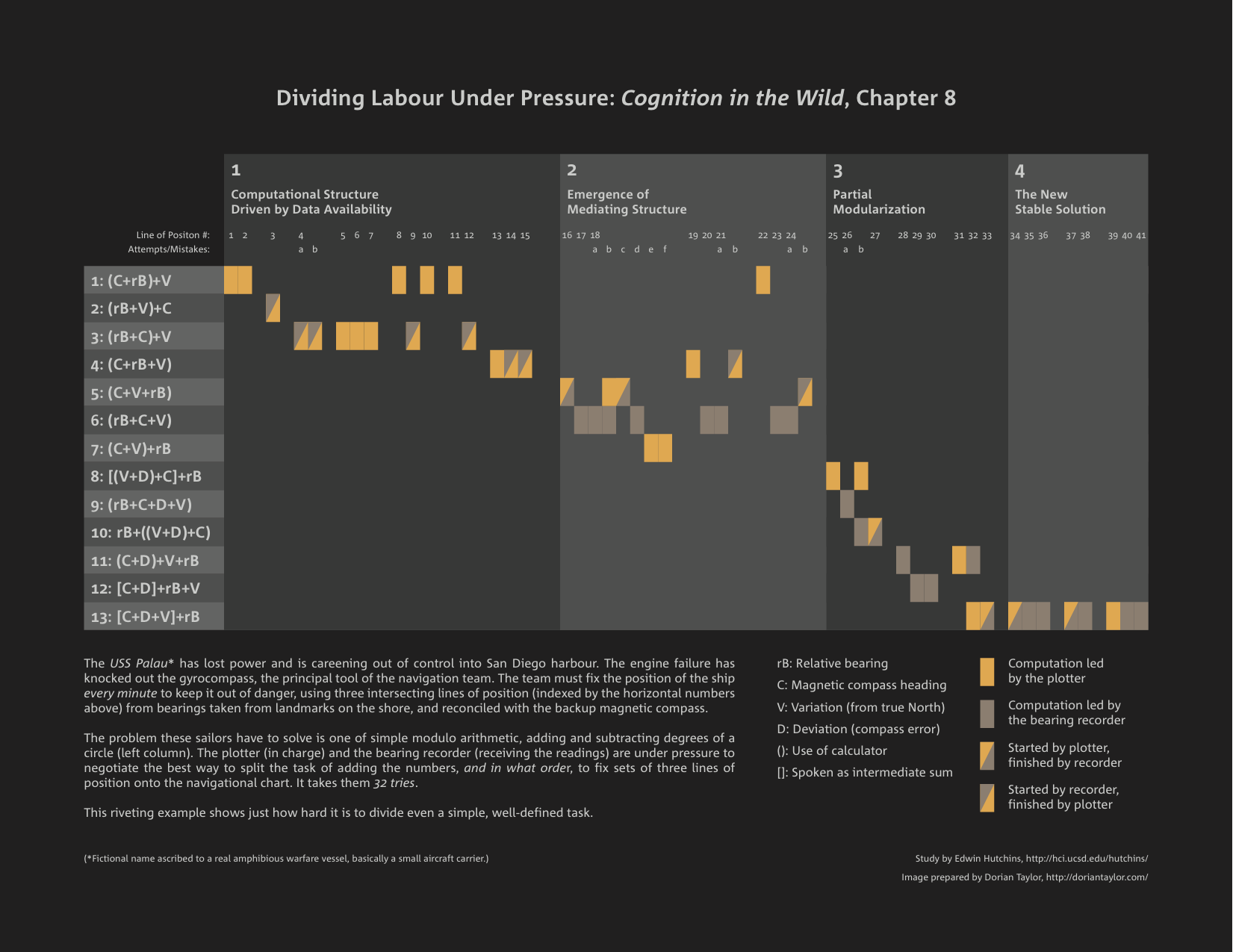

The following example is unparalleled in the demonstration of this problem:

What we're going to look at here is a close ethnographic study of the work of two members of the navigation team of an aircraft carrier✱.

The context is that the ship has just incurred an engine failure which has knocked most of the navigation instruments offline.

Moreover, at the time, the ship is effectively out of control and careening into San Diego harbour.

The two sailors in question have to fix the location of the ship so that it doesn't run aground or hit anything else in the water.

There are two other sailors out on the wings of the bridge with special telescopes, relaying the angles of landmarks relative to the ship by radio to the bearing recorder, who must generate a partial result to the plotter, who then plots the ship's position on the navigational chart.

Why this example is so poignant, to me, is that it is a real-world emergency where people could die, but the problem these sailors are trying to solve is an incredibly well-defined one of simple arithmetic.

What you're looking at is the time series of these two people attempting to negotiate an optimal division of labour that gets the lines on the chart in the most efficient way, so that extremely bad things don't happen.

The column of mathematical expressions on the left side represent the different attempts tried between the two sailors to divide the labour of fixing the ship's position. The horizontal plane, divided into four broad regions of progress, represents fixing the lines of position in one-minute increments.

As you can see, it takes them thirteen different combinations before they arrive at the optimal task decomposition, and thirty-two tries, plus a number of slip-ups, before they catch their groove.

Why I'm showing you this is to suggest that in our line of work, the problems that we're trying to divide labour around are orders of magnitude more complex than a simple arithmetic problem, and typically nobody's life is at stake.

Edwin Hutchins, the cognitive scientist who did this study, wrote that it was impractical that the US Navy include the optimal decomposition in their navigation manual, since it was a rare situation that in practice got resolved in a matter of minutes, but I think the opposite is true for us.

If anything, it points to the fact that should we come across an effective means of dividing a task, we should write it the hell down.

Now that most of the theory business is out of the way, I can get back to practical matters.

The user goal is not only an appropriate unit for production, but also for business.

When you think about it, a website is really just a membrane around a loose collection of responses to user goals—at least ideally.

Despite this, we're still doing commerce as if we're delivering a monolith of features, which naturally correspond to programming tasks.

What if we brought the user goal up to the surface of the commercial transaction?

What if, instead of building websites, we contract to solve user goals, one by one?

Or rather, what if we contract, on an ongoing basis, to generate a stack of user goals, against which the client can then allocate an annual budget for implementation?

In other words, what if we make a wholesale rhetorical shift that turns the process of Web development—in the eyes of the client—from a capital expense, like buying a new photocopier, to an operating expense, that generates a snowball of value over an arbitrarily long period of time?

Moreover, what if we could sell the results of user experience design as a valuable asset in itself, rather than just a means of getting code written?

We have a tendency to handwave at the ROI of UX at large, but individual user goals can be valuated extremely concretely.

- First, you look at its polarity: is it fundamentally a cost-saving measure, or a revenue-generating one?

- Then you look at its entailments: who or what are you going to have to haul in to make it happen?

- Then you look at the asset value of the entailments: what else can they be used for?

Finally, you organize the business arrangement and the project in terms of those user goals which you can solve and deploy today.

To put it another way, I'm suggesting we add a dimension to the project triangle, turning it into a tetrahedron: you can have it fast, cheap, and good, as long as you aren't too picky about what it is.

But the it here always refers to the solution of a concrete user goal which has some significance to the client.

It doesn't matter if it's the most valuable one, because fixating on a forecast of value neglects the cost in both time and money, which I've shown earlier to be completely unpredictable.

This is important, because this the way we introduce antifragility into the business—not just the process—of creative work.

In order to properly conceive an incremental process, and likewise to sell that process, we need to have a working mental model of the shape of how we create value.

Here I introduce a speculative mathematical model I developed to do just that.

All design is problem-solving, and all solved design problems are genuine, if mostly humble, innovations.

Therefore we can use the math of the economics of innovation to talk about the ROI of design.

The value of a new innovation (or design) is realized over time as an S-curve.

It starts off slow, then shoots up like the familiar hockey stick as people start to take it up, then tapers off as its utility is maximized.

The problem here is that we don't know in advance where that curve is going to max out, nor how steep the slope will be.

But we do know one thing: 90% of everything is crap.

What we may know colloquially as Sturgeon's law, or the Long Tail, is known more formally as the Pareto rule: A power-law curve where the biggest observations are orders of magnitude bigger than the majority of the sample population.

If we go in with the assumption that this is how the value of design and innovation plays out, we have something to work with.

Now: Take an individual practitioner, and have them do one job every day in just this way.

The only criterion is that it has something to do with the project, and that they get it done before they clock out for the evening.

If we imagine the uptake and the ultimate value of each of that person's contributions being governed by the Pareto rule, we can likewise imagine a bunch of S-curves that start when those results are deployed, with their origins beginning at the completion date, and their height and slope determined randomly, but following that same rule.

If we overlay those results, we'll get something like this:

Again, the curve starts to go up when the result is put to use, and stops at a random height of theoretical value.

As you can see, most of the curves are small, but there are a few large and one extremely large one.

If we imagine the value of those results to be cumulative, we'll get a wobbly curve that looks like this:

Now, let's say that person gets paid a certain amount every month for their work.

Now we just have to add those functions together and we'll get an idea of what the return looks like over time:

If we run this model over and over, we can see that for some runs it might take a while for the peaks to cross the zero line into a positive net return.

Needless to say we want the curve to get up over the line as soon as possible.

Sometimes the highest peak is realized early on, sometimes not at all. If we accept this model of production, we also have to communicate to our clients that it has its own set of risks.

It sounds counterintuitive, but I think the way to achieve the best results is to order the sequence of tasks by random, with the universe as the random source, and just following one's nose.

What I'm suggesting with this model is that we sell an investment in design on the terms of the overwhelming statistical likelihood that the more of it you do, the more the results accumulate, and the more insanely lucrative returns you'll get.

It's also worth recognizing that probabilistic business models like the publishing and music industries, as well as think tanks and venture capital, make exactly the same assumption.

I'm saying that as designers, we should market ourselves as probabilistic generators of value, rather than deterministic providers of specific deliverables.

Once again: If it isn't obvious, this model is entirely speculative. It's not meant to have any predictive power whatsoever. It merely exists to demonstrate the shape of the value created over time, by way of a generalized creative process.

Now is the point where I need to talk about the technical interventions that actually make this model possible.

It's fine to wax theoretical, but this method relies on getting useful content and interactive functionality into the hands of users, because that's what justifies you getting paid.

It's also important that we think about creating tools that fit how we want to work, rather than working to conform to our tools.

This goes back to the title of the talk, Dissolving the Redesign.

Any business that isn't brand new is almost certain to already have a website. That means they already have at least a logical place where you can put the finished solution do your individual user goals, if only there wasn't an existing website in the way.

Enter the scaffolding.

This is a piece of software infrastructure I wrote to address this particular problem.

Consider it as a baby step toward shaping our process—and our business—to respect the physical—that is to say non-negotiable—characteristics of information as a medium.

If we consider that most mature business entities already have websites, then we can consider that those websites tend to be a garbage dump of legacy technology products. I don't want to have to do surgery to some arcane CMS just to get a little widget online.

The way the scaffolding works is that you set up a new server with the scaffolding on it, and you give that server the same hostname as the old one. Now all requests going to the old site are going through the new one.

The scaffolding mechanism is extremely simple: if the new server can find a page on its own system to respond to a request, then it returns that. Otherwise, it fetches the page from the old website.

As that page from the old site comes through, the scaffolding strips off all the navigation, visual chrome and ancillary content, leaving the nugget of main content in the centre. It then slaps on a new template which you can make, initially, to mimic the old site. That way you can mix in new content and functionality, on the server side, at the sub-page level. Nobody, who isn't in the know, will be able to tell that any of this is happening.

Using this technique, it's possible to replace the client's old website piece by piece, without giving any thought about the technology stack currently in place.

Moreover, this reduces the idea of a launch from a nail-biter to a mere PR event, because you can user-test the new functionality for months if you want to, using the old visual design.

What I hope I got across today is the inherent mismatch between incumbent ways of doing business around making things for the Web, and the process of design necessary to do great work. I've offered a theoretical underpinning for an alternative, and demonstrated tools and techniques to help make that a reality. The buckshot of bullet points to take home goes as follows:

Thank you for your attention.